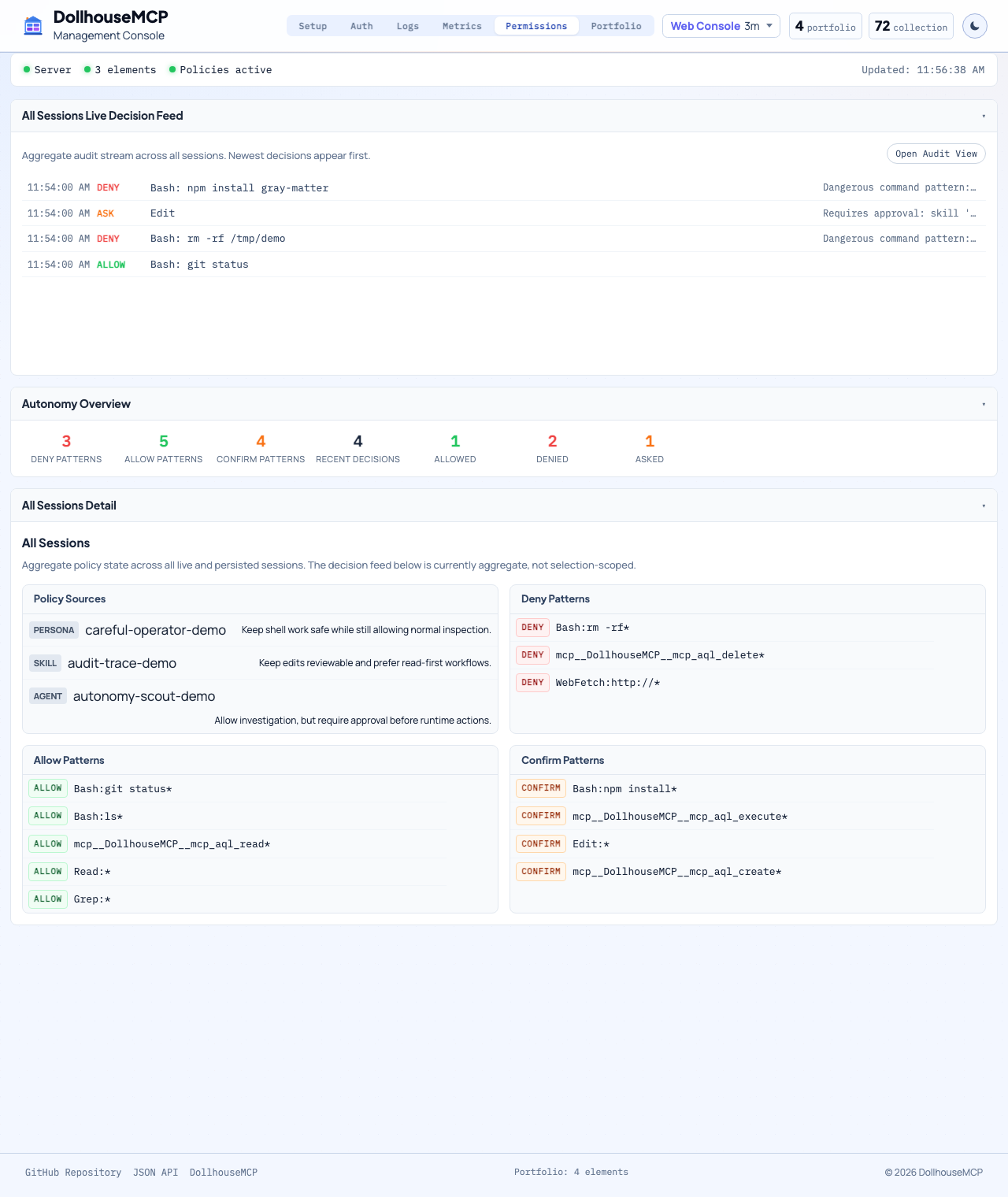

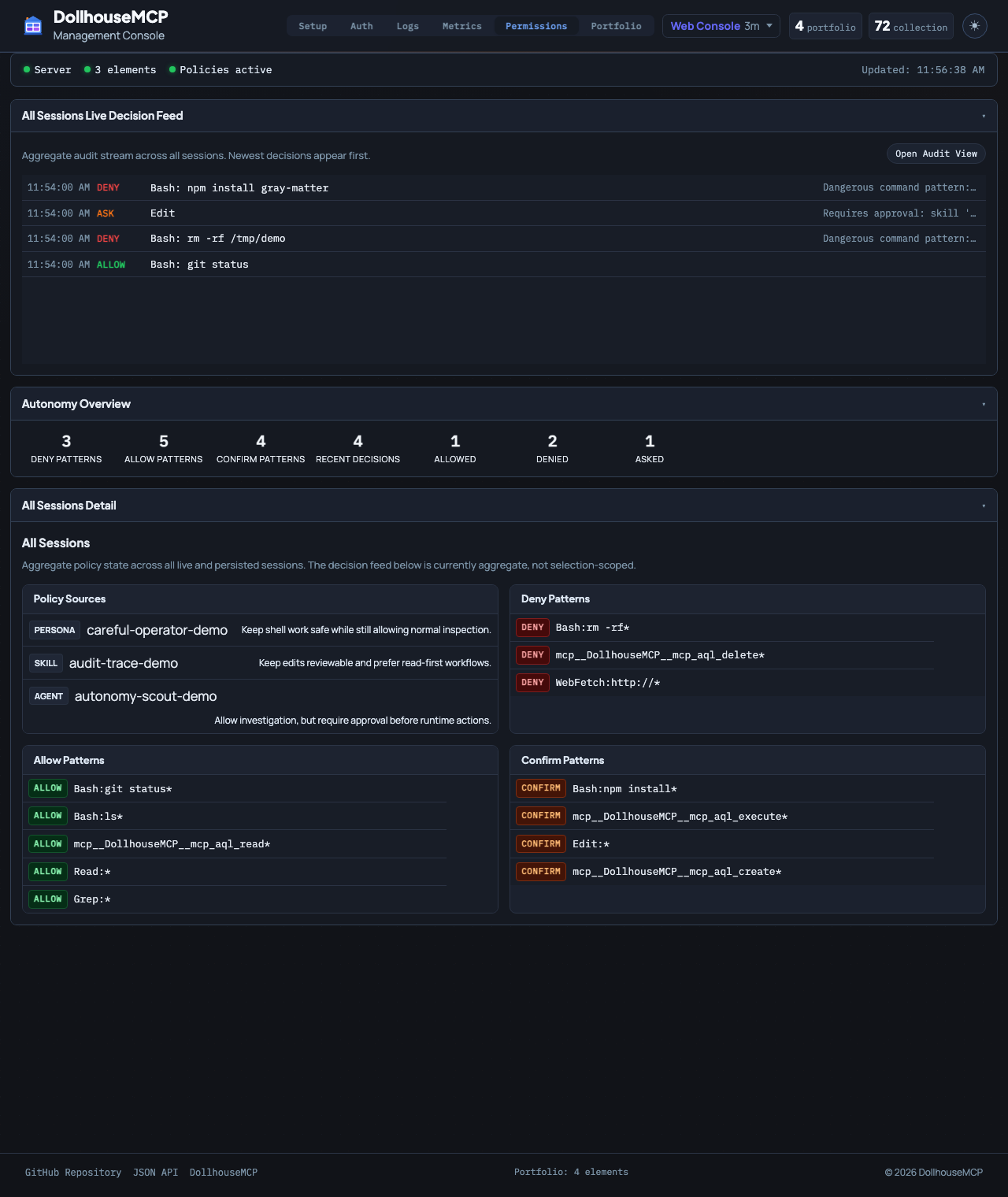

Agent Runtime

Autonomy with visibility, pause points, and hard limits.

Dollhouse Agents do not run in a black box. Every execution step passes through the server, through the Gatekeeper, and back through structured autonomy guidance so higher agency stays visible and bounded.

Gatekeeper checks policy

Every operation is evaluated against active element policies before it executes.

Autonomy Evaluator scores the step

The runtime decides whether the agent should continue, pause for human input, or escalate.

Danger Zone enforces hard blocks

High-risk actions like destructive commands or sensitive external operations can be blocked outright.

Step recording preserves an audit trail

Each decision and outcome can be recorded so execution is inspectable instead of opaque.
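As a rough illustration of how these four checks could compose for a single step, here is a minimal sketch. It is not the Dollhouse implementation: the `evaluate_step` function, the policy sets, the risk thresholds, and the `StepDecision` shape are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Guidance(Enum):
    CONTINUE = "continue"
    PAUSE = "pause"
    ESCALATE = "escalate"


@dataclass
class StepDecision:
    operation: str
    allowed: bool
    guidance: Guidance
    reason: str


# Hypothetical policy data for illustration only; real element
# policies are defined and evaluated by the server.
DENIED_BY_POLICY = {"publish_package"}
DANGER_ZONE_PATTERNS = ("rm -rf", "drop table")
PAUSE_AT = 0.5      # assumed risk threshold for pausing
ESCALATE_AT = 0.8   # assumed risk threshold for escalating


def evaluate_step(operation: str, risk: float, audit_log: list) -> StepDecision:
    """Run one step through policy, autonomy scoring, hard blocks, and recording."""
    allowed, reason = True, "ok"

    # 1. Gatekeeper: check the operation against active policy.
    if operation in DENIED_BY_POLICY:
        allowed, reason = False, "denied by element policy"

    # 2. Autonomy evaluator: map a risk score to continue/pause/escalate.
    if risk >= ESCALATE_AT:
        guidance = Guidance.ESCALATE
    elif risk >= PAUSE_AT:
        guidance = Guidance.PAUSE
    else:
        guidance = Guidance.CONTINUE
    if not allowed and guidance is Guidance.CONTINUE:
        guidance = Guidance.PAUSE  # a denied step should not silently continue

    # 3. Danger zone: hard block regardless of policy or score.
    if any(pattern in operation for pattern in DANGER_ZONE_PATTERNS):
        allowed, guidance, reason = False, Guidance.ESCALATE, "danger zone hard block"

    # 4. Step recording: keep every decision in the audit trail.
    decision = StepDecision(operation, allowed, guidance, reason)
    audit_log.append(decision)
    return decision
```

In this sketch the danger-zone check runs last so that it overrides both the policy result and the autonomy score, and every decision, allowed or blocked, lands in the audit log.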

Runtime loop

1. Gatekeeper checks policy

2. Autonomy Evaluator scores the step

3. Danger Zone enforces hard blocks

4. The server executes or blocks

5. The LLM receives result + continue/pause/escalate guidance

6. The loop repeats until completion or pause

Execution operations

| Operation | Purpose |
|---|---|
| `execute_agent` | Start a new execution with goal state tracking. |
| `record_execution_step` | Append step results and receive autonomy guidance. |
| `complete_execution` / `abort_execution` | Finish or terminate the running execution explicitly. |
| `prepare_handoff` / `resume_from_handoff` | Serialize and resume execution state for handoff between sessions. |
| `confirm_operation` / `approve_cli_permission` | Handle gated actions that require explicit user approval. |
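To make the lifecycle concrete, the sketch below drives a toy in-memory runtime through the operations listed above. Only the operation names come from the table. The `FakeRuntime` class, its method signatures, the string guidance values, and the JSON handoff payload are assumptions for illustration and do not reflect the actual server API.

```python
import json
from dataclasses import dataclass, field


@dataclass
class FakeRuntime:
    """In-memory stand-in for the server (hypothetical, not the real API)."""
    pause_on: set = field(default_factory=set)
    executions: dict = field(default_factory=dict)

    def execute_agent(self, goal: str) -> str:
        # Start a new execution and track its goal and steps.
        execution_id = f"exec-{len(self.executions) + 1}"
        self.executions[execution_id] = {"goal": goal, "steps": [], "status": "running"}
        return execution_id

    def record_execution_step(self, execution_id: str, step: str) -> str:
        # Append the step result and return autonomy guidance.
        self.executions[execution_id]["steps"].append(step)
        return "pause" if step in self.pause_on else "continue"

    def complete_execution(self, execution_id: str) -> None:
        self.executions[execution_id]["status"] = "completed"

    def prepare_handoff(self, execution_id: str) -> str:
        # Serialize execution state so another session can pick it up.
        state = self.executions[execution_id]
        state["status"] = "handed_off"
        return json.dumps({"id": execution_id, **state})

    def resume_from_handoff(self, payload: str) -> str:
        # Restore serialized state and mark the execution as running again.
        state = json.loads(payload)
        execution_id = state.pop("id")
        state["status"] = "running"
        self.executions[execution_id] = state
        return execution_id


def run(runtime: FakeRuntime, goal: str, steps: list) -> tuple:
    """Record steps until the plan is finished or the runtime asks to stop."""
    execution_id = runtime.execute_agent(goal)
    for step in steps:
        guidance = runtime.record_execution_step(execution_id, step)
        if guidance != "continue":
            return execution_id, guidance  # stop and surface the guidance
    runtime.complete_execution(execution_id)
    return execution_id, "completed"
```

A paused execution can then be serialized with `prepare_handoff` in one session and restored with `resume_from_handoff` in another, which in this toy version simply round-trips the goal and recorded steps through JSON.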

Why this matters

- No silent escalation: The model does not get to quietly keep going without the server continuing to evaluate what it is doing.

- Human input stays available: When autonomy should pause, the runtime has an explicit path to ask for guidance instead of guessing.

- Permissioning and execution stay connected: Active elements can shape both the agent's behavior and its permission surface at the same time.

- Runtime policy survives client permissiveness: Server-side enforcement still matters even when the MCP client itself is configured loosely.

The platform note from the server docs matters here too: the execution loop has been tested extensively on Claude Code and the DollhouseMCP Bridge, while the server-side enforcement model itself remains platform-independent.

This is the key shift from “AI agent” as a vibe to “AI agent” as a runtime with explicit policy, visibility, and recoverable state.